Switch upgrades were done this morning, and something has gone wrong with them. Networking engineers are working on restoring full service.

IPv6 access to some of our services is impacted, and other services may be slower than usual.

Some time has passed since I last tightened the TLS settings on my home server. Let's move them a notch higher, this time including my home k3s cluster.

In 2026, using elliptic-curve cryptography (ECC) certificates should be the norm. Fortunately, obtaining them automatically is easy. I'm using cert-manager for Kubernetes ingresses. Switching to ECC is just a matter of adding an algorithm annotation on the Ingress:

annotations:
  cert-manager.io/cluster-issuer: "zerossl-production"
  cert-manager.io/private-key-algorithm: "ECDSA"

and removing the secret containing the old certificate and key.

Small caveat: FreeIPA still lags. While it supports the ACME protocol, issuing ECC certificates through it is not possible yet. I've left my internal domains with RSA certificates.

For the main server, I refresh certificates using a small Ruby script. I had to change RSA.new(3072) to an EC key generation; the rest happened automatically.

Last time I limited Transport Layer Security support to versions 1.2 and 1.3. Today, let's allow only the latest. I don't care about supporting Windows 7-era clients (years out of support).

Ingress on k3s is handled by Traefik. The simplest way to influence its config is by creating a global (named default) TLS configuration option:

apiVersion: traefik.io/v1alpha1
kind: TLSOption
metadata:
  name: default
  namespace: kube-system
spec:
  minVersion: VersionTLS13

That's all!

The change to the nginx configuration on the main server is minimal, too: version 1.2 is removed from the ssl_protocols list, leaving only 1.3.

I still have to tune the TLS settings of Postfix and a few other services later.
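One way to confirm that a server now refuses anything older than TLS 1.3 is to attempt a handshake with a client capped at 1.2 and check that it fails. The following is only a minimal sketch using Python's standard ssl module; the helper names make_client_context and negotiated_version are made up for illustration and are not part of my setup.

```python
import socket
import ssl


def make_client_context(max_version=None):
    """Build a verifying client context, optionally capped at an older TLS version."""
    ctx = ssl.create_default_context()
    if max_version is not None:
        # Pretend to be an older client that cannot speak anything newer.
        ctx.maximum_version = max_version
    return ctx


def negotiated_version(host, port=443, max_version=None, timeout=5.0):
    """Return the negotiated protocol string (e.g. "TLSv1.3").

    Returns None when the connection or handshake fails. Note that this
    also covers DNS and network errors, not only a refused TLS version.
    """
    ctx = make_client_context(max_version)
    try:
        with socket.create_connection((host, port), timeout=timeout) as sock:
            with ctx.wrap_socket(sock, server_hostname=host) as tls:
                return tls.version()
    except (ssl.SSLError, OSError):
        return None
```

Against a TLS 1.3-only server, negotiated_version(host) should report "TLSv1.3", while negotiated_version(host, max_version=ssl.TLSVersion.TLSv1_2) should return None. A None result on both calls points to a connectivity or certificate problem rather than the protocol setting.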

065/100 of #100DaysToOffload

Flock to Fedora is more than a conference – it’s where the Fedora community comes alive. As part of the Commit History campaign, we sat down with confirmed Flock 2026 speakers to hear their stories: what brought them to Fedora, what Flock means to them personally, and what they’re hoping for in Prague this June. This is one of those conversations.

Jaroslav Reznik has been part of Fedora longer than most people remember. His history goes all the way back to Red Hat Linux 5, before Fedora was even known as Fedora. After a brief detour to another distro, he joined the KDE SIG and went on to build a long career in Red Hat’s Program Management team. But it was the EU Cyber Resilience Act (CRA) that brought him back to the Fedora community.

The moment that changed everything? A scene at FUDCon North America in 2009 watching Fedora’s Program Manager command what looked like a sci-fi control room, scheduling Fedora 13. Jaroslav looked at that and thought: I want that job. Years later, he got it.

On the CRA, Jaroslav is clear and passionate. The regulation is the first to formally acknowledge the existence of open source software in legislation. Thanks to an enormous community effort, it’s actually open-source friendly. Non-commercialised community projects are fully exempt. For a project like Fedora, the concept of open-source stewards formally recognised in the regulation opens up a powerful new model for governance.

The program management team is working to build a stewardship governance model around Fedora. They are making it a welcoming place for anyone who wants to support the project. They are clear about what stewardship should and shouldn’t be: it’s not about monetizing open source or adding burdens, it’s about helping the community raise the bar for security together.

Flock holds a special place for Jaroslav; he co-organised the very first Flock held in Prague back in 2014. Now, more than ten years later, he is returning to Prague for Flock 2026 with Roman Zhukov to not only talk about the CRA but run a hands-on workshop on it. His message is simple: you can’t do things from behind a desk.

Flock to Fedora 2026 takes place June 14–16 in Prague. Registration is at capacity but you can join the waitlist. Can’t make it in person? Follow along live on the Fedora YouTube channel. We hope to see you there!

Note: AI (Google Gemini) was used in drafting this article. The content was reviewed and verified before publishing.

I have been appreciating YOASOBI's work for quite a while now. One of the reasons why I chose Oshi No Ko (2023)'s pilot episode as a means to end my long anime media hiatus was that their popular single and the anime series' opening music, Idol, is also featured in one of my favourite videogames, Forza Horizon 6 (2026). Reading into the song's lyrics only made me more intrigued to understand just what the show was all about, given how this feature song was purposely composed for this anime series. My upcoming two-hour-long flight meant that I would have nothing but time to delve deeper into uncovering the darker secrets, all while keeping the boredom at bay by watching the show.

Let me preface this with a disclaimer - I do not write reviews. Or at least, I do not write them until I feel compelled to do so. When I found myself calling up my friend right after landing from my Indigo flight, I realized this was something I would want to talk about. For someone who had sworn off anime media for over a couple of years now, I had little reason to return - let alone watch something that was already three years old. With the anime series itself getting refreshed for the fourth (and final) season, I could have watched something else. But for some reason, I stuck to Oshi No Ko (2023)'s pilot episode to keep me entertained on my flight from Pune to Kolkata, and boy, am I glad that I did that.

The oddly long pilot episode wastes no time throwing you into the deep end. Whether it is about finding hope amidst overriding mediocrity (when it came to Gorou Amamiya), seeking life amidst impending despondency (when it came to Sarina Tendouji), or pursuing belonging amidst surrounding insincerity (when it came to Ai Hoshino) - everyone had their motivations. Also, unlike contemporary anime storytelling, the pilot episode was filled with tonal shifts, transitioning the emotional atmosphere from inescapable despair to heartwarming wholesomeness, and from whimsical fantasies to grounded realities. Along with tight pacing, the fifty-eight-minute-long episode had absolutely zero dull moments.

You know you have struck gold when you find yourself rooting for the side characters too. There was something charming about the likes of Miyako Saitou and Ichigo Saitou, with their personal approach towards nurturing the main characters all while battling their own demons in their desperate struggle. I felt recognized when the narrative trusted my viewing judgement - both when Taishi Gotanda elaborated on the dire state of the show business and when Kana Arima discovered her acting rival during the movie shooting. Every now and then, my inexperience in Japanese forced me to pause playback to take it all in, but the pilot episode had its own ways of rewarding attentive viewers with crucial details.

Their (subjectively appealing) stellar artstyle only ended up checking the remaining boxes for me. The evolving portrayals of Ruby Hoshino and Aqua Hoshino, both in their childish moments and precocious dealings, played a major part in establishing them as the bona fide protagonists of the anime series. From the (rather overused) cherry blossoms of the countryside to the (gloomily overcast) concrete jungle of Tokyo, Doga Kobo pulled no punches in ensuring that I moved into their narrative world the moment I pressed the play button. The (restrictively exceptional) environmental storytelling had social media, small talk, crowd discussions, and inner monologues - and honestly, I could not ask for more.

An Indigo flight changing altitudes is perhaps the worst place to get emotionally shaken, given how you would not want to have a huge lump in your throat all while struggling with blocked ears. If you are a chronic crier, do not watch the pilot episode in public unless you like getting emotionally overwhelmed amidst strangers. Trust me when I say this - the amazing narrative found multiple ways to pull me in by the heartstrings, and even I found my tears welling up in a couple of scenes. I felt weirdly familiar with and equally alienated by the mysterious actions of Ryousuke Sugano, which left me with more questions than answers. If that is what the pilot episode set out to achieve, they sure as heck succeeded.

For a Comic Con hobbyist pilgrim making a prodigal return to anime media, there could not have been a better welcome for me. With the pilot episode covering the first couple of decades of (painstakingly detailed) storytelling progression in its narrative world, Oshi No Ko (2023) has a reliable foundation to build its story forward. It really did not make a difference to me that the series' themes of show business and divine reincarnation are at odds with each other. I honestly did not care that the pilot episode had been spoiled for me about three years back, or that the anime series did not have an (absolutely logical) match with the Seinen demographic. All I wanted was the fun factor and I have had my fair share.

The more I write about Oshi No Ko (2023)'s pilot episode, the more I feel that I am straying from writing a review and coming closer to giving a recommendation. But as the (unfortunately shortened) Idol playback dropped at the end of the pilot episode, I realized that this is what reviews are supposed to be: a compass that steers you away from poorly made anime media and closer to the good ones. And I do not state this lightly: the pilot episode has (most definitely) been among the best ones. I am already itching to see how the story progresses with Ruby and Aqua at the helm. Maybe I will get to do just that after I finish the Winter season's Festival Playlist in Forza Horizon 6 (2026), and so should you.

We, the Forge team, recently onboarded a Codeberg-hosted repo to the new Fedora Konflux instance.

This guide is based on that onboarding experience; the steps and UI are similar in Fedora’s Forge.

oc login): https://api.kflux-fedora-01.84db.p1.openshiftapps.com:6443

Konflux configuration is managed through GitOps in the tenants-config repo on GitLab. The UI is intended to be read-only; you should do everything through merge requests.

Follow the instructions in the tenants-config repo:

create-tenant-resources playbook. It generates the namespace, RBAC

You end up with three files:

- ns.yaml: the namespace with a konflux-ci.dev/type: tenant label
- rbac.yaml: a RoleBinding granting konflux-admin-user-actions to your FAS
- kustomization.yaml: ties together the quota, RBAC, namespace and your

Then run the update-tenant-apps playbook to generate an ArgoCD application manifest per tenant directory and update the ArgoCD kustomization.

This is where you tell Konflux what to build. We went with a Kustomize Configuration-as-Code setup with three layers:

git-provider: forgejo, git-provider-url: https://codeberg.org),
build.appstudio.openshift.io/request: configure-pac

Open a merge request with everything

Example MR.

Each Component needs a matching ImageRepository CR. If you don’t have one, the image controller never provisions a Quay repo, spec.containerImage stays empty on the Component, and the build service just sits there waiting. No webhook, no PaC PR, nothing happens.

Example ImageRepository:

apiVersion: appstudio.redhat.com/v1alpha1
kind: ImageRepository
metadata:
  name: forge-rawhide-production
  namespace: fedora-infra-tenant
  annotations:
    image-controller.appstudio.redhat.com/update-component-image: "true"
  labels:
    appstudio.redhat.com/application: forge-production
    appstudio.redhat.com/component: forge-rawhide-production
spec:
  image:
    name: fedora-infra-tenant/forge-rawhide-production
    visibility: public

The update-component-image: "true" annotation is what tells the image controller to write the Quay URL back to spec.containerImage on the Component.

Example MR. Do not merge yet.

Konflux needs a secret to authenticate with your Forgejo/Codeberg instance:

oc create secret generic pipelines-as-code-codeberg \
  -n {namespace} \
  --type=kubernetes.io/basic-auth \
  --from-literal=password={FORGEJO_TOKEN}

oc label secret pipelines-as-code-codeberg -n {namespace} \
  appstudio.redhat.com/credentials=scm \
  appstudio.redhat.com/scm.host=codeberg.org

The Konflux docs say you need these token scopes:

Don’t restrict the token to a specific repo: scopes like write:user aren’t available with repo-scoped tokens on Forgejo. If you set the right scopes and it still complains about insufficient permissions, try a token with everything enabled.

With the secret in place, merge your MR. ArgoCD picks it up and syncs the resources. Wait a few minutes, then check:

# Did containerImage get set?
oc get components -n {namespace} \
  -o custom-columns='NAME:.metadata.name,IMAGE:.spec.containerImage'

# Are the ImageRepositories ready?
oc get imagerepositories -n {namespace} \
  -o custom-columns='NAME:.metadata.name,STATE:.status.state'

# What does the PaC status say?
# (the dots inside the annotation key are escaped so they are not read as path separators)
oc get components -n {namespace} \
  -o custom-columns='NAME:.metadata.name,STATUS:.metadata.annotations.build\.appstudio\.openshift\.io/status'

If spec.containerImage is filled in and the status shows "state":"enabled", you’re good.
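If you want to script that readiness check, the rule above (image set, status reporting an enabled state) can be sketched in a few lines of Python. This is only an illustration: the helper names are made up, and because the exact layout of the status annotation is an assumption here, the code simply looks for any "state" key anywhere in the JSON.

```python
import json


def find_states(node):
    """Collect every string stored under a "state" key anywhere in parsed JSON."""
    found = []
    if isinstance(node, dict):
        for key, value in node.items():
            if key == "state" and isinstance(value, str):
                found.append(value)
            else:
                found.extend(find_states(value))
    elif isinstance(node, list):
        for item in node:
            found.extend(find_states(item))
    return found


def component_ready(container_image, status_annotation):
    """Apply the rule from the text: image is set and some state is "enabled"."""
    # custom-columns output prints "<none>" for a missing field.
    if container_image in (None, "", "<none>"):
        return False
    try:
        status = json.loads(status_annotation or "")
    except json.JSONDecodeError:
        return False
    return "enabled" in find_states(status)
```

Feed it the two values printed by the oc commands above, for example component_ready(image, status). Any other state, a missing image, or an unparsable annotation counts as not ready.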

At this point Konflux opens PRs on your source repo with auto-generated Tekton pipeline files in .tekton/. Two ways to go:

.tekton/ files,
output-image pointing to quay.io/redhat-user-workloads/{namespace}/{component}
@sha256:... refs from the
serviceAccountName: build-pipeline-{component}

We went with the second option. We already had pipelines with custom version tagging that we wanted to keep, so we pulled in the new task bundles and labels from the generated files and left the rest alone.

The configure-pac annotation gets consumed on the first attempt. If it fails (token issue, rate limit, whatever), you need to re-add it:

# One component
oc annotate component {component} -n {namespace} \
  build.appstudio.openshift.io/request=configure-pac --overwrite

# All of them
for comp in $(oc get components -n {namespace} -o name); do
  oc annotate "$comp" -n {namespace} \
    build.appstudio.openshift.io/request=configure-pac --overwrite
done

To sum it up, we created a tenant on the Konflux-ci cluster, created applications and components, and set a place where the images would be hosted. On a push to the main branch of the Codeberg repo (where we store the Forge Containerfiles), the pipeline gets triggered (scoped by the on-cel-expression to only those contexts where the change happened) and a fresh, tagged image appears on Quay, ready for further testing and deployment.

Thanks to the Konflux Team for the Forgejo support!

The post Onboarding a Forgejo-hosted project to Fedora Konflux appeared first on Fedora Community Blog.

Petr Boy came to Fedora documentation the way many contributors do, by seeing a gap and deciding to fill it. As a researcher, writing is his daily work. When he looked at how he could meaningfully contribute to Fedora, documentation was the obvious answer. He started with Fedora Core 1, stepped away, and returned in 2020 when both the Server Working Group and the Docs Team were being revitalised at the same time. Since then, his focus has been on the “bigger-picture” content structure, readability, consistency, and inspiring others to get involved.

His first Flock was in Cork, Ireland in 2023, and what struck him most was the collaborative approach combined with open, structured dialogue and the sheer range of personalities all genuinely trying to get to know each other.

For a team like Docs, where so much depends on shared standards and careful communication, Petr sees Flock as irreplaceable. New ideas emerge from spontaneous conversation, something the formal structure of video calls simply can’t replicate. His message to anyone thinking about contributing? Fedora needs far more than technical contributors. Documentation, communication, community building: these are all vital, and Fedora needs to do a better job of making that visible. At Flock 2026, he is most looking forward to the working groups and the hallway conversations, the ones that are simply too nuanced to have any other way.

Flock to Fedora 2026 takes place June 14–16 in Prague. Registration is at capacity but you can join the waitlist. Can’t make it in person? Follow along live on the Fedora YouTube channel. We hope to see you there!

Note: AI (Google Gemini) was used in drafting this article. The content was reviewed and verified before publishing.

Many GNOME projects have adopted a policy banning all contributions generated by LLMs. This policy was originally developed by Sophie for Loupe, but is now used in many other notable places:

This project does not allow contributions generated by large languages models (LLMs) and chatbots. This ban includes, but is not limited to, tools like ChatGPT, Claude, Copilot, DeepSeek, and Devin AI. We are taking these steps as precaution due to the potential negative influence of AI generated content on quality, as well as likely copyright violations.

This ban of AI generated content applies to all parts of the projects, including, but not limited to, code, documentation, issues, and artworks. An exception applies for purely translating texts for issues and comments to English.

AI tools can be used to answer questions and find information. However, we encourage contributors to avoid them in favor of using existing documentation and our chats and forums. Since AI generated information is frequently misleading or false, we cannot supply support on anything referencing AI output.

I won’t attempt to argue that you should allow use of AI for writing code. If you wish to ban LLM-generated code, fine. That’s probably inadvisable, but I am not going to object.

But this policy is far stricter than that. Notably, it strictly prohibits AI-generated content in issue reports (except to translate text). Don’t do this! Prohibiting bug reports is stupid and just makes your software worse. Please make sure your project’s AI policy allows for at least AI-generated static analysis results and AI-generated vulnerability reports. Otherwise, you prohibit entirely unobjectionable problem reports.

It’s hard to imagine what could possibly be the value of prohibiting valid bug reports. AI-generated static analysis works well: the AI is able to think about your code, follow execution paths, and automatically discard most false positives to avoid bothering you with them, and the quality of reports is generally pretty high. They are far from perfect, but the same is true of humans.

Here is a typical example of an AI-generated static analysis finding:

2. Resource leak in update_credentials_cb on gnutls_credentials_set failure

File: tls/gnutls/gtlsconnection-gnutls.c:169-172

When gnutls_credentials_set() fails, the function returns without calling g_gnutls_certificate_credentials_unref(credentials). The credentials was either freshly allocated or ref-bumped, so it leaks.

Pasting this into an issue report clearly violates the ban on AI-generated content. And yet, why would you not want to receive a clear and concrete bug report for a memory leak?

I understand not all maintainers are fond of AI, but is your dislike really so extreme that you would choose to ignore valid problems and intentionally make your software worse? If not, then your AI policy should thoughtfully consider how to handle AI-generated content in issue reports. Certainly do not adopt a policy that outright bans all AI-generated content in issue reports.

As an issue reporter, you could theoretically take the problem found by the AI and rephrase all the words, then claim that it is no longer AI-generated content because it is rewritten. This is a waste of time and usually results in a lower-quality, less-detailed result, but you could plausibly do that. Or, if you want to go above and beyond, you could just jump ahead to creating a merge request. But realistically, if your project does not allow any use of AI in issue reports, it’s more likely that either (a) you won’t receive the issue report in the first place, or (b) you won’t receive such issue reports from experienced developers who read and respect your policy, while users who do not read your policy will continue to submit them.

What about security vulnerability reports? Since the start of this year, I have reviewed well over 100 vulnerability reports that I strongly suspect were generated by AI. To reach the “over 100” claim, I only counted vulnerability reports submitted during one particularly heavy four-week period, so this is an extremely loose lower bound. Suffice it to say, I have seen a lot of them. The quality varies dramatically. Vulnerability reports are now often either better or worse than before: better because an experienced human working with a good AI is able to find vulnerabilities that would have surely gone unnoticed without AI, and worse because an inexperienced human with a bad AI might create some pretty terrible issue reports, a significant proportion of which are just outright spam. Low-quality reports remain a problem, but nowadays most AI-generated issue reports are quite good.

Maintainers do not need to tolerate spammy vulnerability reports. If an issue report is bad, of course go ahead and close it. If it’s really bad, then I sometimes don’t even bother replying. But banning good vulnerability reports solely because some portion of the report was generated by AI is unacceptable. AI-assisted vulnerability reports are the new industry standard, and this is not likely to change. Prohibiting issue reports reduces the quality and safety of your software, punishing your users. This is too extreme.

E.P. Unny is a notable Indian political cartoonist who has worked with the famed Shankar’s Weekly and with newspapers such as The Hindu and The Indian Express.

Since 2020, all his cartoons (including those from 2025 and 2026 so far) have been published every week as open access by the Sayahna Foundation.

Unny had been using a font based on his handwriting style for the cartoons, designed by K.H. Hussain of Rachana. Recently, a new font designed by Varshini KVSS & ‘Kandam Collective’ was developed by Rachana Institute of Typography for use in the cartoons, and it has been released as open source; see the specimen and download links.

The character set of the font is Latin only. There are plenty of alternate glyphs (for the upper case and lower case forms of i, j, l, g, etc.; for instance, check the double ‘l’ in ‘Intelligence’ on the specimen above). Such characters are rendered alternately to give a feel of the randomness that handwriting evokes.

The source (and issue tracking) are available at RIT fonts repository.

Across the project, a primary focus was on technical modernization and infrastructure migration. This was highlighted by the major mass rebuild for Python 3.15 in Rawhide, the Workstation team's plans to replace several core desktop components like gnome-keyring and dbus-daemon, and the continued migration of services and repositories to the new Fedora Forge platform. Alongside these technical upgrades, there was a strong emphasis on improving governance and community processes, demonstrated by the Council's work on a new "Fedora Innovation Lifecycle," the official release of new forum moderation guidelines, and discussions within the Docs and EPEL teams to refine workflows and policies. Much of this work was contextualized by the upcoming Flock conference, which influenced planning and was identified as a venue for key in-person discussions. Other common themes included the development of new offerings, such as a home server spin-off and a new graphical UI for DNF, and a consistent focus on community engagement through the ongoing Fedora elections and numerous calls for new package maintainers.

This week, the Fedora 44 elections voting period opened and will run until June 12th, with candidate interviews available for review (e.g., Vít Smolík, Tomáš Hrčka). For users of the forums, new self-moderation guidelines and rules have been released to improve transparency and formalize community standards. On the technical front, a major mass rebuild for Python 3.15 in Fedora 45 has begun and successfully concluded, and packagers can now safely build in Rawhide again. Contributors were also notified of a planned infrastructure outage for server updates. In news relevant to the broader community, a recent Fedora 43 upgrade helped uncover a 20-year-old security bug in Microsoft Outlook related to unencrypted connections.

In preparation for the upcoming Flock conference, the CommOps team launched the #Commit History campaign, inviting contributors to share their origin stories. Fedora Magazine continued its series of interviews with Flock speakers, featuring insights from Jef Spaleta, Valentin Rothberg, Aleksandra Fedorova, Akashdeep Dhar, and Jona Azizaj. Additionally, the weekly Community Update detailed progress across various teams, including work on Fedora Badges, RISC-V for F44, and QE bug fixes. A helpful guide on installing Fedora across two disks was also published.

In their weekly meeting, the Council discussed several key governance topics. They reviewed the draft for a new Fedora Innovation Lifecycle, deciding to formally introduce it after the Flock conference to allow for more community context-building. The group also approved the new moderation guidelines for the Fedora Discussion forum and initiated a broader conversation about improving the representation of community moderators within Fedora's governance structures. A consensus emerged that the current "Initiatives" framework is ineffective and should be replaced, with further discussion planned. Ongoing forum topics included a proposal for a Fedora AI Ecosystem and the eligibility of teens running for Council.

Learn more about the Council team.

The main discussion for the Mindshare group this week continued to focus on the future of surveys within Fedora, specifically the proposal to shut down the LimeSurvey service. The topic was originally raised due to the sole maintainer's burnout, difficult billing changes, and community dissatisfaction with the quality of recent surveys. This week's contribution supported dropping LimeSurvey, suggesting that the Data WG could instead leverage existing, repeatable data sources for analysis. One example was using event data from Pretix via the Fedora message bus to gather insights on attendance, rather than relying on a survey. The idea of a specialized SIG for survey design was also raised to better separate statistical data gathering from simple feedback collection.

Learn more about the Mindshare team.

This week, the Workstation Working Group laid out plans for several significant technical transitions. Key initiatives include replacing the gnome-keyring daemon with oo7, removing the legacy dbus-daemon to standardize on dbus-broker, and modernizing testing infrastructure by replacing xvfb-run with wl-headless-run. These efforts aim to streamline the desktop stack and align with modern practices. Contributor opportunities include finding a new maintainer for the orphaned Showtime package and helping update packages to use the new wl-headless-run testing utility.

In forum discussions, a proposal to undertake a chain of updates related to the mozjs JavaScript engine for the Cinnamon DE in Fedora 44 was debated. The consensus was to prioritize stability for the current release, deferring the larger migration but updating the existing mozjs128 package to include the latest security fixes. Another topic explored the possibility of creating a new Fedora edition for upcoming NVIDIA RTX Spark hardware, with community sentiment leaning towards leveraging existing AArch64 builds and creating documentation rather than introducing a new Spin.

- gnome-keyring daemon will be replaced with oo7 in Fedora Workstation, with work being managed to ensure a smooth transition.
- dbus-daemon package by addressing dependencies that pull it in, such as in the Anaconda installer.
- xvfb-run utility will be incrementally replaced with wl-headless-run for testing; this will be added to the package maintenance checklist.
- mozjs128 package but not to perform a larger migration to mozjs140 to avoid destabilizing a released version of Fedora.
- libcanberra dependency from core desktop packages by rerouting sounds directly to PipeWire.

Learn more about the Workstation / GNOME team.

The Server team held its weekly meeting with a strong focus on the development of a new home server spin-off. The main discussion revolved around user-friendly management and the initial software selection. The group reviewed a draft management guide, which led to a conversation about security practices for home use, including SSH key usage, firewall configuration, and remote access strategies. There was a consensus to leverage Ansible for configuration, with a plan to provide pre-configured playbooks to simplify setup for end-users. Using Cockpit for remote management was also highlighted as a secure and accessible option. The team also discussed which applications should be included by default, agreeing to start with a minimal set from the official Fedora repositories.

Learn more about the Server team.

This week, the Infrastructure team's main operational focus was a planned mass update and reboot outage to apply the latest updates across servers. Significant progress was also made on the migration from Nagios to Zabbix for monitoring; the team is finalizing per-team notifications and is nearing the project's completion, with plans to announce the final switchover soon. Regular administrative tasks included reviewing the monthly AWS usage report and addressing untagged AWS resources.

A key topic of discussion was the future of the now-defunct scm-commits mailing list. A new forum thread was created to gather feedback from the community, especially packagers, on potential replacements. The primary options being considered are a public-inbox service, for which a proof-of-concept is already running, and leveraging the new Fedora Data Working Group (FDWG) platform for analysis and querying of commit data. Contributors are encouraged to join the discussion and share their use cases to help guide the final implementation. Other topics in the weekly and daily meetings included ticket triage and planning around upcoming events like Flock and a Red Hat Day of Learning, which may impact team availability.

Learn more about the Infrastructure team.

The Release Engineering team's weekly meeting focused on the ongoing migration to the new Forgejo instance. A significant point of discussion was the migration of the fedora-scm-requests repository, which is blocked on updates to the fedpkg tool. This change will require a broad rollout to all package maintainers and is not expected to be completed before Flock. The team also explored opportunities for automation, identifying the release End-of-Life (EOL) process and mass branching as prime candidates for future work. Additionally, a scheduled task related to retiring packages with security issues was reviewed; it was determined that the underlying policy was never fully implemented, and the team decided to request its removal from the schedule.

compose-tracker project.

Learn more about the Release Engineering team.

This week, the main highlight was a community discussion around a new tool, "Dnf-ui is ready for testing", a modern GTK4 graphical frontend for DNF5. The developer is actively seeking feedback on installation, usability, and functionality, providing a great opportunity for contributors to get involved. The discussion has already yielded valuable feedback on performance, transaction handling, and testing on Fedora Silverblue via Distrobox.

Routine activities for the Quality team continued with announcements for Rawhide nightly compose testing and the upcoming weekly meeting. On the mailing lists, discussions included a user report about a missing grub2 menu after kernel updates on Rawhide and a question about the process for closing bugs for Fedora 42, which has now reached its end of life.

Learn more about the Quality team.

The main discussion this week centered on the ongoing effort to create a prototype for a modernized Fedora Media Writer. The original proposer acknowledged the community's continued interest and praised a functional prototype that another contributor developed based on the initial designs. However, the initiative has been temporarily put on hold due to other commitments and the need to investigate the existing codebase to avoid a complete rewrite. The conversation also included ideas about a web-based writer, referencing the Chromebook Recovery Utility as an example, and suggested that contributors could help by exploring the codebases of other popular tools like Rufus and Etcher for inspiration.

Learn more about the Design team.

The Docs team held their bi-weekly meeting to discuss several housekeeping and process-related topics. A major organizational change was the standardization of issue labels across all Docs repositories and the decision to consolidate project boards at the organization level to improve cross-repository tracking. The team also discussed how to handle outdated content, including an unsupported system upgrade path in Quick Docs and the ongoing effort to retire old Docs pages on the Fedora Wiki. A debate on contribution workflows took place regarding whether to enforce creating pull requests from forks, with the discussion set to continue in the ticket. Help is needed from the community to apply the new standardized labels to issues in the Quick Docs repository.

On the forums, a new discussion began about "AEO" (AI Engine Optimization) and improving the discoverability of Fedora's documentation for newcomers and automated tools. The conversation highlighted the need for better-organized menus and strategic linking to guide new contributors. In an ongoing topic, the new "Fedora Discussion Forum (Self-)Moderation Guidelines and Rules" were officially published on the docs website, replacing the obsolete Ask Fedora SOPs, and have now been added to Weblate for translation. Finally, a discussion about the legality of including NVIDIA driver installation instructions continued, with a user pointing out that Quick Docs already links to RPM Fusion, questioning the consistency of the policy.

Learn more about the Docs team.

A discussion was initiated this week regarding the licensing of timidity code, which is bundled within the SDL3_mixer package currently being prepared for Fedora. The timidity README offers a choice between "the GNU GPL, the GNU LGPL, or the Perl Artistic License" without specifying versions, creating ambiguity for packaging. The conversation explored how to interpret this statement, considering the code's history predating GPLv3. The consensus was to assume the author intended versions available at the time, leading to an acceptable license combination for Fedora: GPL-2.0-or-later OR LGPL-2.0-or-later OR Artistic-1.0-Perl.
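As a minimal sketch of the conclusion above, the README's unversioned wording can be mapped to the SPDX identifiers the discussion settled on and joined into a single expression. The mapping table and the function name `license_expression` are illustrative assumptions; only the three identifiers come from the discussion itself.

```python
# Map the README's unversioned license names to the SPDX identifiers
# chosen in the discussion (versions that existed when the code was written).
README_TO_SPDX = {
    "GNU GPL": "GPL-2.0-or-later",
    "GNU LGPL": "LGPL-2.0-or-later",
    "Perl Artistic License": "Artistic-1.0-Perl",
}


def license_expression(readme_choices):
    """Join the mapped identifiers with OR, since the README offers a choice."""
    return " OR ".join(README_TO_SPDX[name] for name in readme_choices)


if __name__ == "__main__":
    print(license_expression(["GNU GPL", "GNU LGPL", "Perl Artistic License"]))
```

The OR operator reflects that the recipient may pick any one of the three licenses, which is how the README phrases the offer.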

Learn more about the Legal team.

This week, the COPR team announced and performed a planned maintenance outage on June 2nd to update the copr-backend server. The outage lasted approximately one hour, during which build queue processing was stopped. Existing DNF packages and repositories remained available throughout the maintenance period. The update was completed successfully.

Learn more about the COPR team.

During the weekly EPEL meeting, the main topic was a proposal to delay the retirement of EPEL minor versions. Currently, a minor version is retired on the same day a new RHEL minor version is released, which can surprise maintainers. A two-week delay was proposed to allow in-flight updates to reach the stable repository, but the committee decided to table the discussion to gather more feedback. The results of the Spring 2026 EPEL Steering Committee elections were also announced, with Diego Herrera joining as a new member and Carl George, Troy Dawson, and Jonathan Wright returning. Kevin Fenzi was thanked for 19 years of service as he retires from the committee. On the development mailing list, a discussion about updating OpenColorIO for EPEL 9 highlighted the challenges and general reluctance to introduce updates with library version bumps in stable EPEL releases.
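The effect of the proposed delay is simple date arithmetic, sketched below. The function name and the example release date are hypothetical; the only inputs taken from the meeting are the current same-day retirement and the proposed two-week delay.

```python
from datetime import date, timedelta

# Proposed grace period between a new RHEL minor release and the
# retirement of the previous EPEL minor version.
PROPOSED_DELAY = timedelta(weeks=2)


def retirement_date(rhel_minor_release, delay=PROPOSED_DELAY):
    """Return the day the previous EPEL minor version would be retired."""
    return rhel_minor_release + delay


if __name__ == "__main__":
    release = date(2026, 5, 19)  # hypothetical release date, for illustration only
    print("current policy: ", retirement_date(release, timedelta(0)))
    print("proposed policy:", retirement_date(release))
```

With a zero delay the function reproduces today's behavior, which makes it easy to compare both policies for the same release date.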

Learn more about the EPEL team.

The CentOS Hyperscale SIG held its weekly meeting to discuss kernel updates, transactional systems, and tooling. After delays due to security work, new kernel updates for CentOS Stream 9 and 10 are planned for the upcoming week. The group discussed the possibility of shipping an LTS kernel but concluded against it for now, citing the benefits of tracking the Fedora kernel and the viability of using the stock kernel with modules from the Kmods SIG. Significant progress has been made on a dnf5 plugin for transactional updates, thanks to community contributions for Kalpa Desktop, which brings the goal of a transactional Hyperscale variant closer. Contributors interested in having specific packages from Fedora tracked and updated can reach out to the SIG.

On the tooling front, the hs-relmon tool now features a new interactive review mode to simplify package promotion, which was used to release wprof 0.4. In community news, Paweł Zmarzłowski announced his resignation from the SIG. The team also noted that their activity report is overdue and they plan to finalize and publish it soon. Finally, a cleanup of stale packages was performed to reduce repository metadata size, and dnsmasq was backported to the el9 branch to address a security vulnerability.

Learn more about the CentOS Hyperscale team.

The ELN SIG held one meeting this week, providing status updates on ELNBuildSync (EBS) and bootc. The EBS service has undergone a significant reorganization: its source code has been migrated to a new GitHub repository, its deployment process has been simplified, and a pull request has been submitted to host it within the Fedora Infrastructure OpenShift cluster.

There was also a discussion on the progress of creating bootc images for ELN. This effort is a prerequisite for producing bootc images for CentOS Stream 11. The current work is focused on creating the necessary compose image, with further technical discussions planned for a follow-up meeting.

Learn more about the ELN team.

The Atomic group's main focus this week was the migration to the new Fedora Forge atomic organization, discussed during their weekly meeting. The team decided to begin by migrating repositories that do not depend on Konflux, such as documentation and issue trackers. Repositories requiring Konflux for CI/builds will be moved once the integration is unblocked. The discussion also confirmed that the new organization will be the home for bootc-related projects, including work on ELN and a potential move of the CentOS Stream bootc repository. Contributor opportunities include helping with documentation fixes and issue triage after the initial migration, with a cleanup session planned for a future video call.

In community discussions, new solutions were shared for technical challenges on immutable systems. A user provided an updated guide for running the Guix package manager on Fedora Silverblue, and another user shared their method for integrating the KeePassXC and Firefox Flatpaks.

[...] atomic organization on Fedora Forge.

bootc ELN work and the CentOS Stream bootc container repository are considered in scope for the new atomic organization.

Learn more about the Atomic team.

In this week's CoreOS meeting, the team discussed the upcoming Fedora 45 release cycle and progress on build system modernization. A key topic was the effort to switch CoreOS Assembler (COSA) to run on Fedora 44, with a plan to test the removal of a potentially unneeded dependency to move forward. Following successful work to repair the bootc pipeline, the team decided to re-enable Konflux for building the Rawhide stream. The group also planned the next steps for adopting bootc-image-builder, which includes moving partition layout definitions into the OCI container and staging the rollout across different release streams, starting with Rawhide.

Learn more about the CoreOS team.

During the weekly AI & ML meeting, the group discussed significant packaging updates, including a new version of ollama in rawhide and progress on updating onnxruntime, which is unblocked by a recent protobuf update. There is a call for community help to review several new packages, including the pi-coding-agent and emacs-agent-shell. In a related forum discussion on NPU support, it was noted that an xrt runtime package is now awaiting review. The meeting also addressed the "Fedora AI Developer Desktop" proposal, with a consensus that the SIG should serve as a collaborative space to shape such ideas before wider public announcement. This led to a re-commitment to create better "Getting Involved" documentation to improve the SIG's visibility and accessibility.

[...] editorial-guide-ramalama, will be created under the ai-ml forge namespace for an Outreachy internship project.

Learn more about the AI & ML team.

This week, the Security SIG held its weekly meeting, where the main topic of discussion was user privacy in default applications. Sparked by concerns around Firefox, the group considered creating a Fedora-wide policy to disable telemetry and AI features by default, so that users must explicitly opt in. Given the sensitive nature of the topic, the consensus was to continue this conversation in person at the upcoming Flock conference.

A significant opportunity for contributor engagement was announced on the forums with the publication of a new static analysis report on Fedora 45 Critical Path Packages. The report identified 1,242 new findings since the last release, with an AI analysis flagging 14 important and 12 moderate-impact issues that may have security implications. Package maintainers and other contributors are encouraged to review these findings to help secure Fedora's core components.

Learn more about the Security team.

The DotNET SIG welcomed a new potential contributor, Anugerah Tallenta Agung, who introduced themselves on the mailing list. As a university student and long-time Fedora user, they expressed interest in contributing to the .NET and gaming experience on Fedora. They are seeking guidance on how to get involved, specifically mentioning sharing their experiences and potentially helping with packaging. This provides an excellent opportunity for the SIG to engage with and mentor a new member. No technical discussions or decisions took place this week.

Learn more about the DotNET team.

This week in the Go SIG, the weekly meeting was lightly attended, leading to the postponement of discussions on open tracker issues. The primary point of information was the announcement of the public Fedora 45 Change proposal to introduce Golang 1.27. This proposal outlines the standard update of the Go toolchain for the next Fedora release.

A discussion on the mailing list resolved a packaging issue where spectool failed to download a source tarball. The problem was identified as a mismatch between the %{gosource} macro's generated URL and the upstream git tag, which included a "v" prefix. The solution was to remove a custom %global tag definition from the spec file, allowing the macro to use its default, correct behavior.
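The mismatch can be illustrated with a small sketch. It is not the `%{gosource}` implementation: the URL layout, the repository URL, the version numbers, and the function names are all hypothetical. The only facts taken from the thread are that the upstream tags carry a "v" prefix and that a custom tag override produced a URL that did not match them.

```python
# Hypothetical upstream tags; the thread only says they carry a "v" prefix.
UPSTREAM_TAGS = {"v1.4.0", "v1.4.1"}


def source_url(repo_url, version, tag_override=None):
    """Build an archive URL from a tag (illustrative layout, not the real macro).

    Assumption: without an override, the tag defaults to "v" + version.
    """
    tag = tag_override if tag_override is not None else f"v{version}"
    return f"{repo_url}/archive/{tag}.tar.gz"


def tag_of(url):
    """Recover the tag from a URL produced by source_url()."""
    return url.rsplit("/", 1)[1].removesuffix(".tar.gz")


if __name__ == "__main__":
    repo = "https://example.org/upstream/project"  # placeholder URL
    broken = source_url(repo, "1.4.1", tag_override="1.4.1")  # custom tag, no "v"
    fixed = source_url(repo, "1.4.1")  # default behaviour
    for url in (broken, fixed):
        print(url, "exists upstream:", tag_of(url) in UPSTREAM_TAGS)
```

Dropping the override is the smaller change here, because the default already agrees with the upstream naming scheme.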

Learn more about the Go team.

The main point of discussion this week was a call for new maintainers for a large number of orphaned Perl packages. Due to the inactivity of a previous maintainer, there is a risk of these packages being retired. This presents a significant opportunity for contributors to step up and take ownership of packages they are interested in. Other activity consisted of routine package maintenance, including several version updates and a bug fix.

The perl-WWW-RobotRules package was updated to version 6.03.

The perl-Net-CalDAVTalk package was updated to version 0.17.

The perl-Net-CardDAVTalk package was updated to version 0.11.

[...] procps-ng usage was merged for perl-IO-Socket-SSL.

Learn more about the Perl team.

The Python team announced and executed the mass rebuild of packages for Python 3.15 in Fedora 45 (Rawhide). The process was conducted in a dedicated side tag to minimize disruption, starting on June 3rd. Maintainers were advised to pause their builds in the main Rawhide branch during this period. The side tag was successfully merged on June 6th, completing the transition.

With Python 3.15 now the default in Rawhide, the focus shifts to resolving any remaining build failures. Maintainers are encouraged to fix their packages, with guidance provided for handling common issues like test failures or broken dependencies. This is a key opportunity for contributors to help ensure their packages are compatible with the latest Python version.

Learn more about the Python team.

[...] ouch compression utility, community members explained that Fedora packaging is volunteer-driven. The requester highlighted the tool's memory safety as a key advantage over existing tools like unar and 7z. While there was interest in the tool, no one committed to packaging it.

[...] URL or VCS RPM tags. The VCS tag was favored as a more suitable candidate, though concerns were raised about the significant effort required for a mass update and the need for a clear benefit to package maintainers.

The fedora-comps repository has been migrated from Pagure to Fedora Forge. The repository, which maintains the XML files for installer groups and dnf metadata, is now located at https://forge.fedoraproject.org/releng/fedora-comps.

[...] fedpkg, a clarification that the RPM 6.1 Change Proposal does not include a switch to the v6 package format, a report on static analysis findings in Critical Path packages which sparked a debate on responsible disclosure, and a reminder about the sunset of pagure.io clarifying its scope.

[...] dnfdaemon, which is based on dnf4, as all its consumers have migrated to dnf5daemon-server.

[...] snakemake and a large number of its related Python packages. The decision was made to free up time, citing the high total maintenance effort and long-term concerns about bundled pre-compiled assets in the main package.

[...] libyui stack of packages, including libyui-gtk, libyui-mga, and others, as they are no longer used by any packages in Fedora.

[...] qmc2 package because its upstream project is no longer maintained.

[...] gap package to version 4.16.0, which will include a SONAME bump for libgap. This change is not expected to impact any other Fedora packages.

[...] girara, zathura, and mupdf would be updated, involving SONAME bumps. The updates, which include important bug fixes, will be built in side-tags for both Rawhide and stable releases under FESCo exceptions.

[...] kdsingleapplication in Rawhide, which included a SONAME bump. All affected dependent packages were subsequently rebuilt in a side tag.

[...] intel-cmt-cat package. It was clarified that as a previously inactive packager, he needed to follow the process for returning contributors to be re-added to the packager group.

[...] libpst, requested sponsorship to package passwordsafe. Dominik 'Rathann' Mierzejewski sponsored him, welcoming him back into the packager group.

Flock to Fedora is more than a conference – it’s where the Fedora community comes alive. As part of the CommitHistory campaign, we sat down with confirmed Flock 2026 speakers to hear their stories: what brought them to Fedora, what Flock means to them personally, and what they’re hoping for in Prague this June. This is one of those conversations.

Jona Azizaj’s first Flock was ten years ago in Kraków, Poland. What struck her most was how approachable everyone was. In a community full of experienced contributors, people made space for new voices, listened to her experiences building the local community in Albania, and made her feel like her perspective genuinely mattered. Those small moments, she says, are what made her feel like she truly belonged.

A decade on, Jona sees Flock as one of the most powerful tools for growing the next generation of Fedora contributors. Online mentorship happens asynchronously and at a distance. Flock, however, creates something different: the chance to sit down with someone, share experiences, and build real trust. Flock is where contributors grow more confident, find their place, and realise that open source is about far more than technical work.

For Flock 2026, Jona and the Fedora Mentor Summit team are bringing three initiatives, now in their 5th edition.

A successful Flock, for Jona, is one where people leave feeling more confident than when they arrived. It is an event where the connections built there carry on long after the event ends.

Flock to Fedora 2026 takes place June 14–16 in Prague. Registration is at capacity, but you can join the waitlist. Can’t make it in person? Follow along live on the Fedora YouTube channel. We hope to see you there!

Note: AI (Google Gemini) was used in drafting this article. The content was reviewed and verified before publishing.

I have a bunch of different ARM SBCs, some Raspberry Pis, some Rockchip based, and some others. Some of them I have in 1U rack mount cases. Some in cases that I have 3d printed. They have all had a common issue. When something goes wrong, I need to unplug them, move them to my desk, and connect them to my desktop via a USB to tty adaptor. Aside from being inconvenient, it also meant I had to be home to debug what was happening.

I figured there had to be a better way. In the end, I used Claude to help me write a solution. What I came up with is a project to make an ESP32 Web Terminal. Using an ESP32 wired to the UART on the SBCs, I can securely access the serial console of my devices. I have used a couple of different ESP32 devices, from a USD$3 ESP32 C3 Mini to a USD$8 XIAO ESP32S3, all of which work well. For the Raspberry Pis, I purchased some premade JST SH 1.0 mm 3-pin connector cables to plug into the UART port, and for other devices, I made some custom cables with 2.54 mm Dupont crimp pin connectors.

I have most of my SBCs powered by PoE. In the pictured example, I soldered some headers onto the PCB of the PoE hat to provide a 5V power source to the ESP32, and it all runs self-contained in the rack-mount unit. I do want to work on making the connections a little neater and the housing better. But for now, this is functional. While the software on the ESP allows resetting the SBC and controlling the power, I do not currently have that wired up.

As for provisioning the ESPs, I run FreeIPA at home for authentication, and I have dogtag set up as a CA. When I have wired up and flashed a new board, it initially runs as an access point, which I connect to in order to join it to my home network. Once it is connected to my network, I run an Ansible playbook to create and upload SSL certificates signed by my CA and change the admin password. That way, I can connect without any SSL warnings and use a known non-default password to log in. So far, I am quite happy with how they have performed. I still have some things I want to make better, and I also want to finish testing a setup where I connect to an SBC that exposes its serial port over USB. In theory, it should work with the ESP32S3; I just need to test it.

While it has added quite a few more devices to my network, it is useful to be able to access and debug what is happening without having to move devices and plug them into another computer. I have considered options for externally powering the ESPs and the best ways to connect the ESPs to the SBCs. I would at least like to make things more robust with a 3D printed enclosure for the ESP. Each unit has cost me between USD$5 and USD$12, and each has acceptable performance. I have intentionally stuck to using small ESPs, though it would work well with bigger devices also. Powering each through a shared power source would require additional circuitry to isolate them and prevent voltage leaks.

Another busy week for me. Lots of little things all over the place.

We got everything updated and rebooted and cleaned up any messes from that (at least as far as I know). We did firmware updates on servers this time, and those always cause things to take much longer. Instead of a 'quick' 5m reboot of a server, it's more 20-25m to apply all the firmware updates and reboot a bunch of times. Ah well, it's good to be up to date.

We did have one arm server fail to come up after reboot. ;( It has a memory error, so we are having datacenter folks reseat all the memory (this happened once before and that cleared it up).

I was able to try and move some old long running tickets over the finish line this week:

systemd-boot signing. This is all set up, but needs to be tweaked in the package and tested. Note that this is a self-signed cert, but it will still hopefully help folks using systemd-boot.

Looked over a bunch of work from Stephen Gallagher to fix some dist-git repos that have commits that break git fsck. I hope I can finish this up early next week.

Got the drm-panic 'application' deployed. (I just deployed, the change owner did all the heavy lifting).

Set up a pagure-stg-ro01 machine to test a 'readonly' pagure instance.

Got koji upgraded to 1.36.0.

Got all koji builders, hubs and kojipkgs moved to Fedora 44.

Moved all our proxies to Fedora 44.

Moved all our compose hosts to Fedora 44.

Fixed some openshift apps that were still pulling from docker hub (and getting rate limited). I even moved greenwave to using a hummingbird memcached container. Works great!

Flock is coming up fast now. I will be traveling to it starting next Thursday. So expect me to be largely offline Thursday and Friday, and then only sporadically online while I am at Flock.

Really looking forward to meeting up with folks and getting that good flock infusion of energy.

As always, comment on the fediverse: https://fosstodon.org/@nirik/116704516544974008

Flock to Fedora is more than a conference – it’s where the Fedora community comes alive. As part of the In the Commit History campaign, we sat down with confirmed Flock 2026 speakers to hear their stories: what brought them to Fedora, what Flock means to them personally, and what they’re hoping for in Prague this June. This is one of those conversations.

Akashdeep’s history with Flock goes back around five years, and his perspective on it has evolved significantly. During his time on the Fedora Council, he participated in the grueling process of reviewing over 150 talk proposals in a single cycle. This task was made harder by the fact that acceptance is often tied to sponsored travel, meaning a funding rejection can mean a contributor simply can’t attend at all.

But beyond the sessions and schedules, Akashdeep is emphatic about what Flock is really for. Roughly 75% of the experience is about human connection; understanding the person behind the screen, building friendships, and embodying the “friends foundation” philosophy at the heart of Fedora. Technical work is the bonus, not the point.

Nowhere is this clearer than in the story of the Fedora Badges revamp. Interest in rebuilding the platform, which despite its 2003-era interface plays a vital role in motivating new contributors, dates back to 2019. But it was Flock’s hallway conversations and dedicated workshops that finally built the consensus needed to move the project forward.

Akashdeep also wants people to know that contributing to Fedora infrastructure is more accessible than it looks. You don’t need to be on a specific team or work for a particular company. Just join a chat, introduce yourself, and find your corner. As one contributor discovered, starting with documentation led to a whole journey into diversity and inclusion work. Community bonding is what keeps people, and the technical work is the reward.

Flock to Fedora 2026 takes place June 14–16 in Prague. Registration is at capacity, but you can join the waitlist. Can’t make it in person? Follow along live on the Fedora YouTube channel. We hope to see you there!

Note: AI (Google Gemini) was used in drafting this article. The content was reviewed and verified before publishing.

This is a report created by the CLE Team, a team of community members working in various Fedora groups, for example Infrastructure, Release Engineering, and Quality. The team also moves forward some initiatives inside the Fedora Project.

Week: 01 – 05 June 2026

This team is taking care of day to day business regarding Fedora Infrastructure.

It’s responsible for services running in Fedora infrastructure.

Ticket tracker

This team is taking care of day to day business regarding CentOS Infrastructure and CentOS Stream Infrastructure.

It’s responsible for services running in CentOS Infrastructure and CentOS Stream.

CentOS ticket tracker

CentOS Stream ticket tracker

This is the summary of the work done regarding the RISC-V architecture in Fedora.

This is the summary of the work done regarding AI in Fedora.

This team is taking care of quality of Fedora: maintaining CI, organizing test days, and keeping an eye on overall quality of Fedora releases.

This team is working on the introduction of https://forge.fedoraproject.org to Fedora and the migration of repositories from pagure.io.

This team is working on keeping EPEL running and helping package things.

This team is working on improving user experience, providing artwork, usability, and general design services to the Fedora Project.

If you have any questions or feedback, please respond to this report or contact us in the #admin:fedoraproject.org channel on Matrix.

The post Community Update – Week 23 2026 appeared first on Fedora Community Blog.

Release Candidate versions are available in the testing repository for Fedora and Enterprise Linux (RHEL / CentOS / Alma / Rocky and other clones) to allow more people to test them. They are available as Software Collections, for parallel installation, the perfect solution for such tests, and as base packages.

RPMs of PHP version 8.5.7RC1 are available

RPMs of PHP version 8.4.22RC1 are available

ℹ️ The packages are available for x86_64 and aarch64.

ℹ️ PHP version 8.3 is now in security mode only, so no more RC will be released.

ℹ️ Installation: follow the wizard instructions.

ℹ️ Announcements:

Parallel installation of version 8.5 as Software Collection:

yum --enablerepo=remi-test install php85

Parallel installation of version 8.4 as Software Collection:

yum --enablerepo=remi-test install php84

Update of system version 8.5:

dnf module switch-to php:remi-8.5
dnf --enablerepo=remi-modular-test update php\*

Update of system version 8.4:

dnf module switch-to php:remi-8.4
dnf --enablerepo=remi-modular-test update php\*

ℹ️ Notice:

Software Collections (php84, php85)

Base packages (php)

RPMs of PHP version 8.5.7 are available in the remi-modular repository for Fedora ≥ 42 and Enterprise Linux ≥ 8 (RHEL, Alma, CentOS, Rocky...).

RPMs of PHP version 8.4.22 are available in the remi-modular repository for Fedora ≥ 42 and Enterprise Linux ≥ 8 (RHEL, Alma, CentOS, Rocky...).

ℹ️ These versions are also available as Software Collections in the remi-safe repository.

ℹ️ The packages are available for x86_64 and aarch64.

ℹ️ There is no security fix this month, so no update for versions 8.2.31 and 8.3.31.

Version announcements:

ℹ️ Installation: Use the Configuration Wizard and choose your version and installation mode.

Replacement of default PHP by version 8.5 installation (simplest):

On Enterprise Linux (dnf 4)

dnf module switch-to php:remi-8.5/common

On Fedora (dnf 5)

dnf module reset php
dnf module enable php:remi-8.5
dnf update

Parallel installation of version 8.5 as Software Collection

yum install php85

Replacement of default PHP by version 8.4 installation (simplest):

On Enterprise Linux (dnf 4)

dnf module switch-to php:remi-8.4/common

On Fedora (dnf 5)

dnf module reset php
dnf module enable php:remi-8.4
dnf update

Parallel installation of version 8.4 as Software Collection

yum install php84

And soon in the official updates:

⚠️ Please note:

ℹ️ Information:

Base packages (php)

Software Collections (php83 / php84 / php85)

You attended Flock 2026, the Fedora contributor conference, in Prague, Czech Republic

Caveat lector

This post discusses tools reluctantly written with AI assistance. If you don’t entertain using them under any circumstance, and think even reading about them legally compromises your ability to reimplement them yourself, stop reading now.

Happy Friday! Of course it’s not Friday anymore in Asia, and if I finish this post in time, it’s still the work day in the Western Hemisphere.

When you work in a large distributed project, it’s not dissimilar to working in a large multinational company - there are too many people to know everyone (or at least not at once!) and you might not know where someone is and if you’re pinging them in the middle of the night.
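One low-tech way to avoid the middle-of-the-night ping is to convert the current time to the other person's UTC offset before writing. The sketch below is only an illustration and is not one of the tools discussed in this post; the function names, the fixed UTC offsets, and the 22:00 to 07:00 "night" window are all assumptions.

```python
from datetime import datetime, timedelta, timezone


def local_hour(now_utc, utc_offset_hours):
    """Return the hour of day at the given UTC offset."""
    return now_utc.astimezone(timezone(timedelta(hours=utc_offset_hours))).hour


def is_night(now_utc, utc_offset_hours, start=22, end=7):
    """True if the local time falls in the assumed night window [start, end)."""
    hour = local_hour(now_utc, utc_offset_hours)
    return hour >= start or hour < end


if __name__ == "__main__":
    now = datetime.now(timezone.utc)
    # Hypothetical colleagues, identified only by their UTC offset.
    for name, offset in {"colleague in UTC+9": 9, "colleague in UTC-7": -7}.items():
        print(name, "probably asleep:", is_night(now, offset))
```

Fixed offsets ignore daylight saving time, so a real tool would more likely store an IANA time zone name per person and resolve it with a time zone database.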

In the saga of the USTR versus Brazil's Pix, I see the hand of Apple's and Meta's lobbying far more than that of Visa and Mastercard.

The credit card networks have benefited enormously from the massive democratization of banking that has taken place in Brazil in recent years. I do not believe these are the American companies that lobbied for the USTR sanctions.

Apple, on the other hand, does not allow Pix to be integrated into Apple Pay unless the transaction (the payer's bank) pays it a cut, which is impossible with Pix. Apple Pay with Pix would eliminate the QR code step with the bank's app, and payment would happen with a simple tap. That factor (or the lack of it) means iPhone users still prefer to pay by credit or debit card, because it is faster, more natural, and more convenient. But if the merchant offers a discount for paying with Pix, the story changes.

As for Meta, the owner of the most peer-to-peer app in the world, WhatsApp, it is natural that it would integrate payments right there and earn a cut from that intermediation. But Pix is even more peer-to-peer, and more democratic, because it is direct, with no intermediaries (the Central Bank is not an intermediary, only a data integrator), and free for both the payer and the payee or merchant. This makes WhatsApp Payments an unsustainable and irrelevant service in Brazil.

Too bad for them; congratulations to us Brazilians.

Again a few good podcasts, I especially liked the one about The Pre-Training Wall and the Mythical Man Month.

The Ask - what is this meeting actually about?

Fedora Infrastructure team will be applying updates to servers and rebooting them.

Many services will be affected. Most should only be down for a short time as their particular resources are rebooted. However, some may be down for a non-trivial amount of time due to RHEL-9 to RHEL-10 upgrades.

In …

OpenSSL 4.0 was released just over a month ago. So, how is its support progressing in syslog-ng? Well, Git master already supports it, and the patch is easy to backport to earlier releases. At the same time, version 4.12 will support OpenSSL 4.0 out of the box.

A month ago, someone from Gentoo Linux reached out to the syslog-ng team about OpenSSL 4.0 support. I asked the community about their expectations, knowing that version 4.0 is not an LTS version. However, I quickly learned that all major distros were preparing to use it in their rolling development versions. A few days later, we also received hints on how to add support for it in syslog-ng. And thus, a PR was born, which is now merged into syslog-ng git master.

What does this mean for you?

Originally published at https://www.syslog-ng.com/community/b/blog/posts/the-status-of-openssl-4-0-support-in-syslog-ng

Flock to Fedora is more than a conference — it’s where the Fedora community comes alive. As part of the #In the CommitHistory campaign, we sat down with confirmed Flock 2026 speakers to hear their stories: what brought them to Fedora, what Flock means to them personally, and what they’re hoping for in Prague this June. This is one of those conversations.

Aleksandra Fedorova’s journey into Fedora started with a sticker. At LinuxTag in Berlin, her first properly organised Linux community event, she approached the Fedora booth simply wanting a sticker. What happened next changed everything. The people behind the booth invited her to join them on their side of the table. That single gesture dismantled the wall between user and contributor, and she never looked back.

For Aleksandra, Flock isn’t the place for deep technical work. Instead, it’s where the Fedora Council reads the room, sensing priorities, spotting coordination gaps, and picking up on tensions before they become real problems. She’s also refreshingly honest about Flock’s limitations: the costs of attending mean it’s not always a fully representative cross-section of the community, and understanding the broader Fedora ecosystem requires deliberate effort beyond the event itself.

But what Flock offers that nothing else can? The human element. No mailing list or Matrix channel lets you simply walk up to someone and start a conversation without a formal introduction. At Flock, the hallway is as valuable as the schedule. For Flock 2026, Aleksandra hopes the event helps ease current tensions; the reminder that everyone is working toward the same goal, even when they disagree on how to get there, is something only being in the same room together can provide.

Flock to Fedora 2026 takes place June 14–16 in Prague. Registration is at capacity but you can join the waitlist. Can’t make it in person? Follow along live on the Fedora YouTube channel. We hope to see you there!

Note: AI (Google Gemini) was used in drafting this article. The content was reviewed and verified before publishing.

Yes, the Fedora 43 upgrade brought an interesting revelation for all Outlook users, one that Microsoft is unlikely to be thrilled about. Outlook was not encrypting email connections, even though SSL/TLS was clearly enabled in the account settings. It looks like that bug dates back to at least Outlook 2007, the oldest Outlook version I was informed about.

Every six months, Fedora servers require an upgrade to the next release version, as you all know. This May we had to upgrade from 42 to 43, and in this upgrade the Dovecot POP/IMAP server switched to version 2.4.3. Dovecot did us all an unexpected favor: the new version is not backwards compatible and required a full rewrite of the service config. This change introduced a new paradigm: PLAIN TEXT passwords are no longer allowed over unencrypted connections.

This is a major break with the oldest RFCs (i.e. RFC 1081) regarding POP3 behavior, but a good one IMHO. No one should still use unencrypted connections to any form of service on the internet when we have easy to use encryption protocols like STARTTLS (STLS) at hand in any major client.

After the upgrade, “we” (admins & customers) did not even know about the now-broken auth mechanism. That came a day later, when customers started to call the support line about requesters popping up asking them to enter their passwords again. This is normal behavior if auth fails… and it failed hard.

As all admins know, such upgrades result in a higher number of support calls. To my surprise, it was all Outlook clients that called. The oldest version so far was Outlook 2007. We even had an old macOS Outlook :-). What they all had in common was that the mailbox prefs had “SSL/TLS” enabled but used port 110, the old cleartext port for POP3, whereas port 995 is the correct SSL port. A normal mail client would change the port number to 995 as soon as you enable SSL/TLS encryption. This is because you can’t “speak” SSL on a non-SSL port unless you choose STARTTLS, which starts as a cleartext connection and upgrades itself to an SSL-encrypted one later.

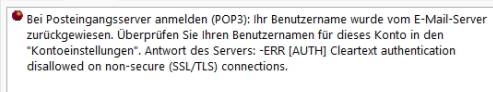
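To make the port logic above concrete, here is a minimal sketch of how a mail client could reconcile the chosen security mode with the port. The names `Security`, `DEFAULT_PORT` and `resolve_pop3_port` are hypothetical and only illustrate the idea; the sketch assumes the conventional POP3 ports (110 and 995) and is not how any particular client is implemented.

```python
from enum import Enum
from typing import Optional


class Security(Enum):
    NONE = "none"              # cleartext POP3
    STARTTLS = "starttls"      # cleartext connect, then upgrade via STLS
    IMPLICIT_TLS = "ssl/tls"   # TLS handshake before any POP3 traffic


# Conventional POP3 ports: 110 for cleartext/STARTTLS, 995 for implicit TLS.
DEFAULT_PORT = {
    Security.NONE: 110,
    Security.STARTTLS: 110,
    Security.IMPLICIT_TLS: 995,
}


def resolve_pop3_port(security: Security, port: Optional[int] = None) -> int:
    """Return the port to use, refusing the combination described above."""
    if port is None:
        # No explicit port: follow the security mode, as a normal client would.
        return DEFAULT_PORT[security]
    if security is Security.IMPLICIT_TLS and port == 110:
        # Fail loudly instead of silently falling back to cleartext.
        raise ValueError(
            "SSL/TLS selected but port 110 is the cleartext POP3 port; "
            "use 995 or switch to STARTTLS"
        )
    return port
```

The design point is the explicit error: a mismatch between the security setting and the port is reported to the user rather than resolved by quietly dropping encryption.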

Outlook made the worst move a security-conscious app can make: it silently ignored the chosen SSL option and used the unencrypted port 110 without any notice to the user. After our server upgrade, the following message popped up whenever you tried to open your inbox:

“-ERR [AUTH] Cleartext authentication disallowed on non-secure (SSL/TLS) connections.”

The server logs revealed it clearly: the user used a non-secure connection and correctly got this message. This never got noticed before, since the EU GDPR only states that corporations and organisations need to protect their data via transport encryption like TLS. Private individuals don’t need to do so.

Even some of the notable folks of Fedora did not use encryption, which I personally advise changing immediately. Having this in mind, who are we to judge if you encrypt your connection or not?

You can easily check if TLS encryption is working. Send yourself an email and open the mail headers; you will find lines like this:

Received: from bastion01.fedoraproject.org ([38.145.32.11] helo=bastion.fedoraproject.org)

by s113.resellerdesktop.de with esmtps (TLS1.3) tls TLS_AES_256_GCM_SHA384

(Exim 4.99.2)

(envelope-from <updates@fedoraproject.org>)

…

Any good MTA ( Exim, Postfix, etc. ) will note if the connection was encrypted or not.
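The header check above can also be scripted. The following is a minimal sketch that scans the Received headers of a message for TLS markers: the "with esmtps"-style protocol keyword and a "TLS1.3" / "TLSv1.3"-style version note. The function name `received_hops` is hypothetical, the second hop in `SAMPLE` (mail.example.org) is invented for contrast, and the patterns are assumptions based on the Exim line shown above; other MTAs may word their notes differently.

```python
import re
from email import message_from_string

# "with" protocol keywords ending in S/SA (ESMTPS, ESMTPSA, LMTPS, ...) imply TLS.
_TLS_PROTOCOL = re.compile(r"\bwith\s+(?:UTF8)?(?:E?SMTP|LMTP)SA?\b", re.IGNORECASE)
# Version notes such as "TLS1.3" (Exim) or "TLSv1.3" (seen in other MTAs).
_TLS_VERSION = re.compile(r"\bTLS\s?v?(\d\.\d)\b", re.IGNORECASE)


def received_hops(raw_message: str):
    """Return (encrypted, tls_version, header_text) for each Received header."""
    msg = message_from_string(raw_message)
    hops = []
    for value in msg.get_all("Received", []):
        flat = " ".join(value.split())  # unfold continuation lines
        version = _TLS_VERSION.search(flat)
        encrypted = bool(_TLS_PROTOCOL.search(flat) or version)
        hops.append((encrypted, version.group(1) if version else None, flat))
    return hops


# First hop is taken from the header shown above; second hop is made up.
SAMPLE = (
    "Received: from bastion01.fedoraproject.org ([38.145.32.11] helo=bastion.fedoraproject.org)\n"
    "\tby s113.resellerdesktop.de with esmtps (TLS1.3) tls TLS_AES_256_GCM_SHA384\n"
    "\t(Exim 4.99.2)\n"
    "\t(envelope-from <updates@fedoraproject.org>)\n"
    "Received: from localhost (localhost [127.0.0.1])\n"
    "\tby mail.example.org with esmtp\n"
    "Subject: test\n"
    "\n"
    "body\n"
)

if __name__ == "__main__":
    for encrypted, version, text in received_hops(SAMPLE):
        label = f"TLS {version}" if version else ("TLS" if encrypted else "no TLS note")
        print(f"{label}: {text[:60]}")
```

A hop without any TLS note is not proof of a cleartext connection, only a hint that it is worth checking the transport directly.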

If you don’t see an encryption notice, you can use this command:

tcpdump -A -n -n port 110 or port 143

in a root terminal and see if the unencrypted port is used for transport. If so, check whether the traffic is cleartext or upgraded via STLS.
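As a complement to watching the traffic, a client-side probe can ask the server whether it offers STLS on the cleartext port. This is a minimal sketch using Python's standard `poplib`; the helper names `advertises_stls` and `probe_pop3` and the host `mail.example.org` are hypothetical, and the probe assumes the server answers the CAPA command.

```python
import poplib
import ssl


def advertises_stls(capabilities: dict) -> bool:
    """True if a CAPA response (as returned by poplib's capa()) lists STLS."""
    return any(name.upper() == "STLS" for name in capabilities)


def probe_pop3(host: str, port: int = 110, timeout: float = 10.0) -> str:
    """Connect to the cleartext POP3 port and try to upgrade the session."""
    conn = poplib.POP3(host, port, timeout=timeout)
    try:
        if not advertises_stls(conn.capa()):
            return "cleartext only: server does not advertise STLS"
        # Upgrade the connection; certificate checks use the system defaults.
        conn.stls(context=ssl.create_default_context())
        return "STLS available: session upgraded to TLS"
    finally:
        try:
            conn.quit()
        except Exception:
            conn.close()


# Usage (needs network access, so it is left commented out):
# print(probe_pop3("mail.example.org"))
```

No credentials are sent by this probe; it stops after the TLS upgrade, so it is safe to run against a production server.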

So… THANKS Fedora 43 and Dovecot 2.4 … you revealed a 20 year old security bug in Outlook \o/

Disclaimer: It is possible that MS patched the Outlook UI in the past in a way that only old accounts are affected by this major fail. As Fedora users we had no Outlook available to test this.

This is a part of the Fedora Linux 44 Mindshare Elections Interviews series. Voting is open to all Fedora contributors. The voting period starts Monday, June 1st and closes promptly at 23:59:59 UTC on Friday, June 12th 2026.

Matrix rooms: #council:fedoraproject.org, #mindshare:fedoraproject.org, #fedora-forgejo:fedoraproject.org, #admin:fedoraproject.org, #badges:fedoraproject.org, #apps:fedoraproject.org, #join:fedoraproject.org, #mentoring:fedoraproject.org

Over the past year, I have been actively contributing to the event planning [1] [2] and proposal curation in the Mindshare Committee. Representing our vested interests in the Fedora Council, I have paved the way for improvements in the regional event support (i.e., event owners and regional inventory), digital ambassadorship (i.e., swagpack designs and social media) and recognition service (i.e., Community Metrics and Fedora Badges).

Besides championing our community presence in underrepresented regions (e.g., in DevConf.IN 2026 and FOSSAsia 2026 in APAC), I have continued staying at the forefront of the Fedora Forge community initiative, working as an infrastructure architect for Fedora Infrastructure applications, organizing Fedora Mentor Summit during Flock events and mentoring budding contributors formally/informally in the community.

Technology might be why I joined the Fedora Project, but I stayed because of the people who made me feel at home. Progressing in various aspects of our planned functions over the past years has taught me one crucial thing – contributors are actually retained when they feel noticed, supported and trusted with meaningful work. The Mindshare Committee is where that happens, and I want to keep building what we started.

Furthermore, as the committee’s representative to the Fedora Council, I have seen firsthand how decisions at the governance level shape the contributor experience on the grassroots level. The update during the Fedora Council Strategy Summit 2026 gave us an opportunity to serve event organizers, community ambassadors and voluntary contributors better, all while using these outcomes to enhance the community health.

Simple. We need to show up where the community is. A community booth at one event, some interactive workshops at another: that is where regional event support begins, and it ultimately shows people that we do care. By keeping our infographic swagpacks regularly updated as part of digital ambassadorship, we give people reasons to come back to us when the time is right.

With the upcoming collectible rarity feature in Fedora Badges and tangible contributor recognition awards (both as a part of the Fedora Mentor Summit event and separately), we nudge people towards more opportune contribution avenues while avoiding burnout in longtime contributors. This could further be extended to our Fedora Linux Release Parties too, as ultimately, most of our community members started off as its users.

Contributor Recognition. With over half a decade of experience in this space, the “What now?” problem (after the first contribution) has only become worse with the advent of AI Assisted Contribution Activities. Newcomers struggle to realize the value proposition of the community connection that Fedora Project could provide, and hence, it has become the need of the hour to incentivize contributions using rewarding activities.

But apart from that, we also need to do better at further improving our local presence in underrepresented regions. In this past term, my pilot experiment on APAC events worked wonders, and it showed us all the community power we can tap into by just being there. With reusable swagpacks, localized printing, active conversations and documented accounts, this limited experiment can scale well across various parts of the globe.

This article was originally posted on Fedora Commblog on 29 May 2026.

This is a part of the Fedora Linux 44 Council Elections Interviews series. Voting is open to all Fedora contributors. The voting period starts Monday, June 1st and closes promptly at 23:59:59 UTC on Friday, June 12th 2026.

Matrix rooms: #council:fedoraproject.org, #mindshare:fedoraproject.org, #fedora-forgejo:fedoraproject.org, #admin:fedoraproject.org, #badges:fedoraproject.org, #apps:fedoraproject.org, #join:fedoraproject.org, #mentoring:fedoraproject.org

While I could not make it to the Fedora Council through elections in the previous term, I was chosen as a Mindshare Representative from the second half of the term. Besides bringing the interests of the Mindshare Committee to the Fedora Council Strategy Summit 2025, I also worked on further progressing the Fedora Forge Community Initiative with my work on the private issues and Pagure Migrator. Staying true to my volunteer-first community agenda, I also worked on the documentation for the Fedora Project Contribution Model to encourage empathetic interactions and manage expectations in our mostly volunteer-driven community.

Following the elector concerns from the previous term, I explicitly reinforced just how important it was for us to leverage objective community health metrics over arbitrary conditions, a point that I voiced during the foundational drafting of the Fedora Verified proposal. With my inclination towards process sustainability, I have often voted for deferring decisions until we have accounted for most (if not all) feedback. I have had a hands-on approach towards major proposals like Fedora Forge, Image Mode, FedoraCVE trademark and Fedora Verified, spending roughly ten hours per week simply reading through the Fedora Discussion threads.

Besides my direct involvement in the Fedora Council, I am also working as an infrastructure architect on various projects in the Fedora Infrastructure and leading the Fedora Badges Revamp Project while mentoring an Outreachy intern. Being elected into the Mindshare Committee has also allowed me to lead the logistics of various Fedora Project in-person representations at APAC events like DevConf.IN 2026 and FOSSAsia 2026, all while curating and guiding Fedora Project community events across the world. In my past life (or roughly five years back in human years), I also led the Fedora Websites and Apps community initiative.

One of the first things that comes to my mind about risks for our community is decision transparency. The discussions [1] [2] around the AI Developer Desktop Proposal were a valuable learning experience as they showed how much community members care about understanding why we vote the way we do, not just what we voted for. I see an opportunity here for elected members to share their reasoning with their votes on a given proposal, so others can follow the thought process. After all, I would want to vote for someone who engages with what the community has to say and is willing to show their work, even through various disagreements.

To dive even deeper, we also risk alienating the community members by deciding on a certain proposal before it is theoretically ready to be discussed. While a stopgap opportunity here might be ratifying a proposal readiness guidelines document that the members could refer to, in my honest opinion, being active around the Fedora Project community platforms just ends up helping a lot more. If this requires us to spend more hours around the desk, have more frequently organized synchronous meetings, include more electable Fedora Council seats or set regular Fedora Council social hours – then it is something that we need to account for.